Facebook reveals its Reporting Process [Infographic]

Looks like Facebook is becoming more open and transparent with its 900+ million users.

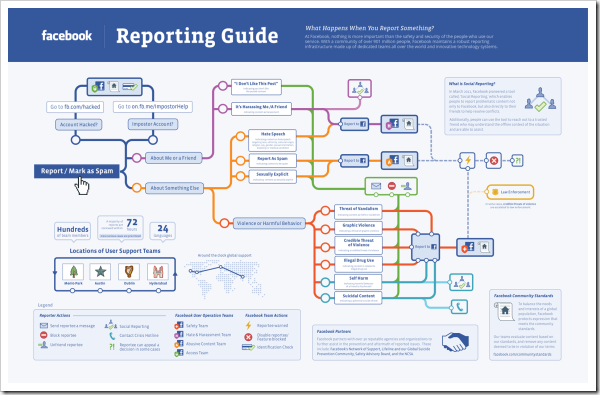

Facebook’s Safety team yesterday published an infographic that offers a peek at how content on Facebook is classified and how it is handled by various Facebook teams once a piece of content is reported or flagged by Facebook users.

Facebook Reporting Guide


It is clear from this infographic that Facebook has a robust reporting process in place, especially for the various forms of objectionable content that users post on Facebook. The reporting teams work around the clock and across geographies to ensure that content violating Facebook’s guidelines is removed as early as possible. (Note: This infographic is more complex than I thought; after 15 minutes of trying to understand it, I am still blank.)

Facebook has also added new features for handling user requests for content removal, i.e., cases where a particular piece of content does not violate Facebook’s guidelines but another user wants it removed (for whatever reason). Although Facebook will still not remove such content itself, it has launched tools that allow people to engage directly with one another to resolve their issues, beyond simply blocking or unfriending another user.

Of particular note is the social reporting tool, which allows people to reach out to other users or trusted friends to help resolve a conflict or open a dialog about a piece of content.

Overall, I think it is nice to see that Facebook is getting more transparent about its internal processes and letting users know what is going on behind the scenes.

Some questions surely pop up in my mind though: Would this have happened in the pre-IPO Facebook era? Is Facebook now under pressure from its stakeholders to be more open and transparent?

What’s your take?

[Download the entire Facebook reporting Infographic from here]