The future of AI infrastructure may no longer lie in giant, warehouse-sized data centers. Instead, NVIDIA-powered mini AI data centers could soon appear inside homes, garages, and residential neighborhoods. The concept is gaining attention as startups and hardware companies look for faster and cheaper ways to meet exploding AI computing demand.

At the center of this shift is NVIDIA’s compact AI supercomputer platform, DGX Spark, a small but powerful system designed for developers, researchers, and enterprises. NVIDIA says the device delivers up to 1 petaflop of AI performance at FP4 precision while fitting on a desk.

What Is A Mini AI Data Center?

Traditionally, the AI models behind services like ChatGPT run inside massive centralized data centers filled with thousands of GPUs that consume enormous amounts of electricity. However, growing AI demand has created serious problems:

- Power shortages

- Delayed construction timelines

- Rising infrastructure costs

- Community opposition to large data centers

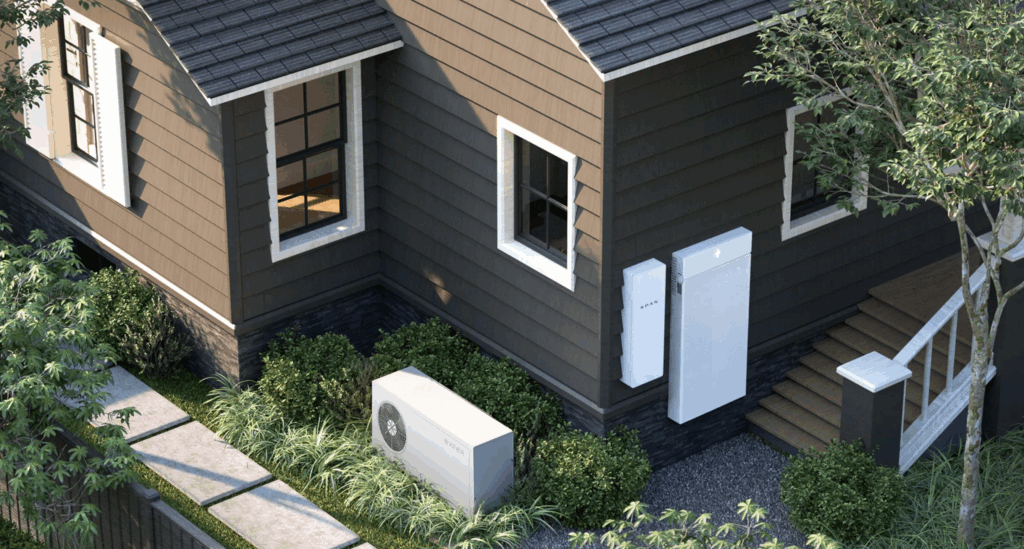

To address these problems, companies are now exploring “distributed AI infrastructure,” where smaller AI compute nodes are spread across homes and buildings instead of being concentrated in one giant facility.

Startup Span, for example, is developing compact AI server units called XFRA nodes that can be installed in residential areas. The company claims thousands of these small nodes together could deliver the same compute power as a traditional 100-megawatt AI data center.
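To put the 100-megawatt comparison in perspective, the short Python sketch below works out the average power each node would need to draw for a given fleet size. The node counts are hypothetical, chosen only to illustrate "thousands," and the calculation assumes compute scales roughly with electrical power, which the article does not confirm.

```python
FACILITY_MW = 100  # facility size quoted in the article


def implied_node_kw(node_count: int, facility_mw: float = FACILITY_MW) -> float:
    """Average power per node (kW) if node_count nodes together draw facility_mw."""
    return facility_mw * 1000 / node_count


# Hypothetical fleet sizes; the article only says "thousands" of nodes.
for nodes in (2_000, 5_000, 10_000):
    print(f"{nodes:>6} nodes -> {implied_node_kw(nodes):.0f} kW per node")
```

Under these assumptions, a fleet in the low thousands implies tens of kilowatts per node, so the per-node power budget depends heavily on how many nodes are actually deployed.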

NVIDIA’s DGX Spark Could Power This Revolution

NVIDIA’s DGX Spark is emerging as a key technology behind this idea. Originally introduced as “Project DIGITS,” the system combines NVIDIA’s Grace Blackwell architecture with 128GB of unified memory and can run AI models with up to 200 billion parameters locally.
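A rough back-of-the-envelope check shows how a 200-billion-parameter model can fit in 128GB. The Python sketch below estimates the size of the weights alone at different precisions. It assumes the 200-billion figure refers to weights quantized to about 4 bits each, and it ignores activations, caches, and system overhead, so treat the numbers as illustrative only.

```python
UNIFIED_MEMORY_GB = 128  # memory size quoted in the article


def weight_footprint_gb(params_billions: float, bits_per_param: int) -> float:
    """Approximate weight size in decimal GB: billions of params x bytes per param."""
    return params_billions * bits_per_param / 8


# Compare common precisions for a 200-billion-parameter model.
for bits in (16, 8, 4):
    size = weight_footprint_gb(200, bits)
    verdict = "fits" if size < UNIFIED_MEMORY_GB else "does not fit"
    print(f"{bits}-bit weights: {size:.0f} GB -> {verdict} in {UNIFIED_MEMORY_GB} GB")
```

Only the 4-bit case comes in under 128GB by this estimate, which suggests that running the largest supported models locally depends on aggressive quantization.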

The compact machine is roughly the size of a mini PC but delivers data center-class AI capabilities. NVIDIA says it is designed for:

- AI model development

- Autonomous AI agents

- Robotics applications

- Local AI inference

- Data science workloads

The system’s relatively low power usage compared to massive AI servers makes it attractive for decentralized deployments. Recent software updates have even reduced idle power consumption significantly.

Why Homes Could Become AI Infrastructure

The biggest challenge in AI today is electricity availability. Building giant AI campuses requires huge amounts of grid power, cooling systems, and land. Distributed home-based AI nodes could bypass many of these limitations by using existing residential electrical infrastructure.

NVIDIA executive Marc Spieler reportedly said leveraging existing powered locations “makes a lot of sense” because obtaining large power allocations for centralized data centers has become increasingly difficult.

This could eventually create a future where:

- Homes host AI compute nodes

- Homeowners earn income from spare electricity or cooling capacity

- AI workloads shift dynamically across neighborhoods

- Local AI reduces cloud dependency and latency

Still Early, But A Massive Shift May Be Starting

While the concept sounds futuristic, experts believe distributed AI infrastructure may become necessary as demand for AI computing continues to grow rapidly. Analysts estimate that global AI power demand could soon exceed the capacity of many existing grids.

NVIDIA’s growing ecosystem around DGX Spark, combined with startups building neighborhood AI networks, suggests the industry is already preparing for a future beyond traditional mega data centers.